Spectral Instability in Attention Matrices as a Leading Indicator of Transformer Rule Violations

Code and data: here

Why a Controlled Setting

It is difficult to explain why a transformer makes an error. We are all developing a broad sense for when it seems more likely, and probably have a few tricks in markdown files to try and help, but we really lack any sort of analytic way of talking about it. Context pollution gets thrown around, and many people look at benchmarks with a few tweaks but I have yet to see research on the fundamental mechanism that causes an error. With any other statistical model you can understand, you can examine contributions to the residual, even in a lot of image neural networks you can see what about the image caused it to be misclassified. In mathematics when you really want to understand something, you usually create a toy model, make wild simplifications, making huge asymmetric trades of accuracy for legibility. That is what I try and do here. The toy model I hope captures a few features correctly. Concept clustering, and a firm definition for failure. Even though the model and task are synthetic, it gives us a very specific lens to hunt for emergent behaviors within a transformer we might exploit for further work to expand these ideas. The core idea here is that if the insights fail here, they would never work, but if they work here, they might have a chance to work in a real world setting.

The specific question is whether spectral properties of the attention matrices can predict when a transformer is about to fail, before the failure shows up in the output. And if so, how far in advance. I have a bag of tricks from random matrix theory and functional analysis, and the question is whether any of them track with model failure when you look at the geometry of attention heads over time. This is not a finished result and I want to be careful about that. Spectral methods for hallucination detection are a hot space right now and I have no interest in adding to the pile of overclaimed papers. I have revised the experimental design more times than I care to admit, and I am posting it now because I think the reasoning is worth showing even though the story is not done. The next piece is Phi-3, and I want the controlled-setting work visible before I get there.

Why DCSBM Graphs

A degree-corrected stochastic block model (DCSBM) defines communities of vertices with dense within-community edges and sparse between-community edges. Edge probabilities follow $$P_{ij} = \theta_i \, \theta_j \, \omega_{b_i, b_j}$$ where \(\omega_{ab} = p_{\text{in}}\) if \(a = b\), \(\omega_{ab} = p_{\text{out}}\) otherwise, and \(\theta_i\) are degree-correction parameters sampled from a Zipf distribution. This is a natural model of concept adjacency. Semantically related tokens are more likely to co-occur, and the block structure captures the clustering of concepts into topics or domains. The degree correction adds realistic heterogeneity, some vertices are hubs, others are peripheral, which mirrors the power-law frequency distributions you see everywhere in natural language. Training a transformer to predict next tokens on random walks over this graph forces it to learn community structure from sequential observations. It has to internalize which vertices tend to follow which, and how transition probabilities shift depending on the current block. Same fundamental challenge as a language model learning that certain tokens are likely in certain contexts, just legible.

The trick is the block jumper vertices. Each jumper carries a delayed rule: encountering it at step \(t\) means the walk must reach a specific target community at step \(t + r\). By varying \(r\) across $$r \in \left\{ \lfloor s \cdot w \rceil \;\middle|\; s \in \{0.5,\, 0.7,\, 0.9,\, 1.0,\, 1.1,\, 1.3,\, 1.5,\, 2.0\} \right\}$$ where \(w\) is the context window, I control the difficulty. Short rules (\(r = 32 = 0.5w\)) are easy because all relevant information is nearby. Long rules (\(r = 128 = 2w\)) are hard because the model has to hold a constraint that has long since left the attention window. Think of it as a document that states a fact on page one that constrains what can be said on page three. The model saw the constraint, but can it hold onto it long enough to act on it correctly? Every rule event has a known outcome (followed or violated), a known rule length, and a fully observable attention state at every intermediate step. That is the whole point of the setup.

Experimental Setup

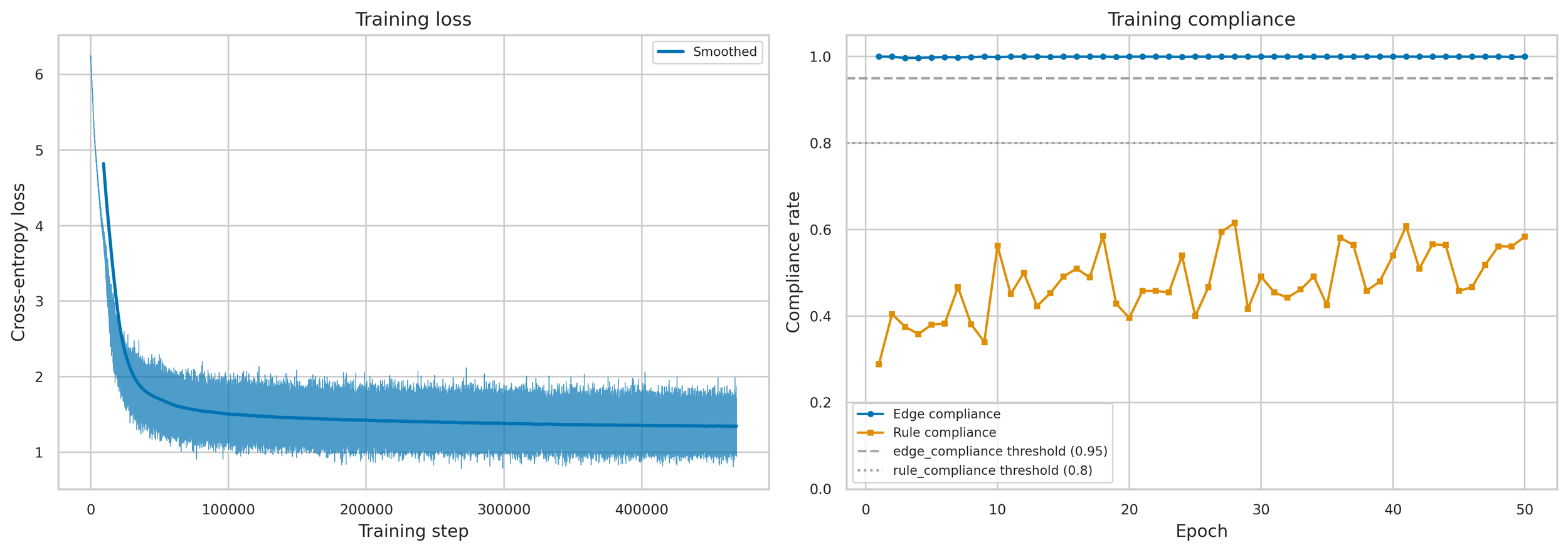

The anchor experiment uses a 4-layer, 1-head transformer with \(d_{\text{model}} = 128\) and a context window of \(w = 64\) tokens. The graph has \(n = 500\) vertices in \(K = 4\) blocks, with \(p_{\text{in}} = 0.25\) and \(p_{\text{out}} = 0.03\). Training runs for 50 epochs on 200,000 walks with next-token prediction, gated by a sufficiency criterion requiring edge compliance above 0.95 and rule compliance above 0.80 before proceeding to evaluation.

At every generation step during evaluation, I compute the full SVD of both the \(QK^T\) attention matrix (the raw attention scores) and the \(A \cdot V \cdot W_O\) matrix (the attention-value-weighted output, which captures the OV circuit). From these I extract five spectral metrics: Stable rank: $$\text{srank}(M) = \frac{\|M\|_F^2}{\|M\|_2^2} = \frac{\sum_i \sigma_i^2}{\sigma_1^2}$$ Effective dimensionality of the attention pattern. A matrix with one dominant singular value has stable rank near 1, many comparable singular values push it higher. Grassmannian distance, the geodesic on the Grassmannian \(\text{Gr}(k, n)\) between the top-\(k\) left singular subspaces at consecutive timesteps: $$d_G(U_t, U_{t-1}) = \left\| \arccos\left(\sigma_i(U_t^T U_{t-1})\right) \right\|_2$$ How fast the attention subspace rotates from one step to the next. Spectral entropy:** $$H = -\sum_i p_i \log p_i \quad \text{where} \quad p_i = \frac{\sigma_i}{\sum_j \sigma_j}$$ How uniformly the singular values are distributed. I measure all of these on both \(QK^T\) and AVWO, giving complementary views into what the model is attending to versus what information is actually being written. The evaluation produces 22,222 sequences with 211,729 total rule events across all 8 rule lengths.

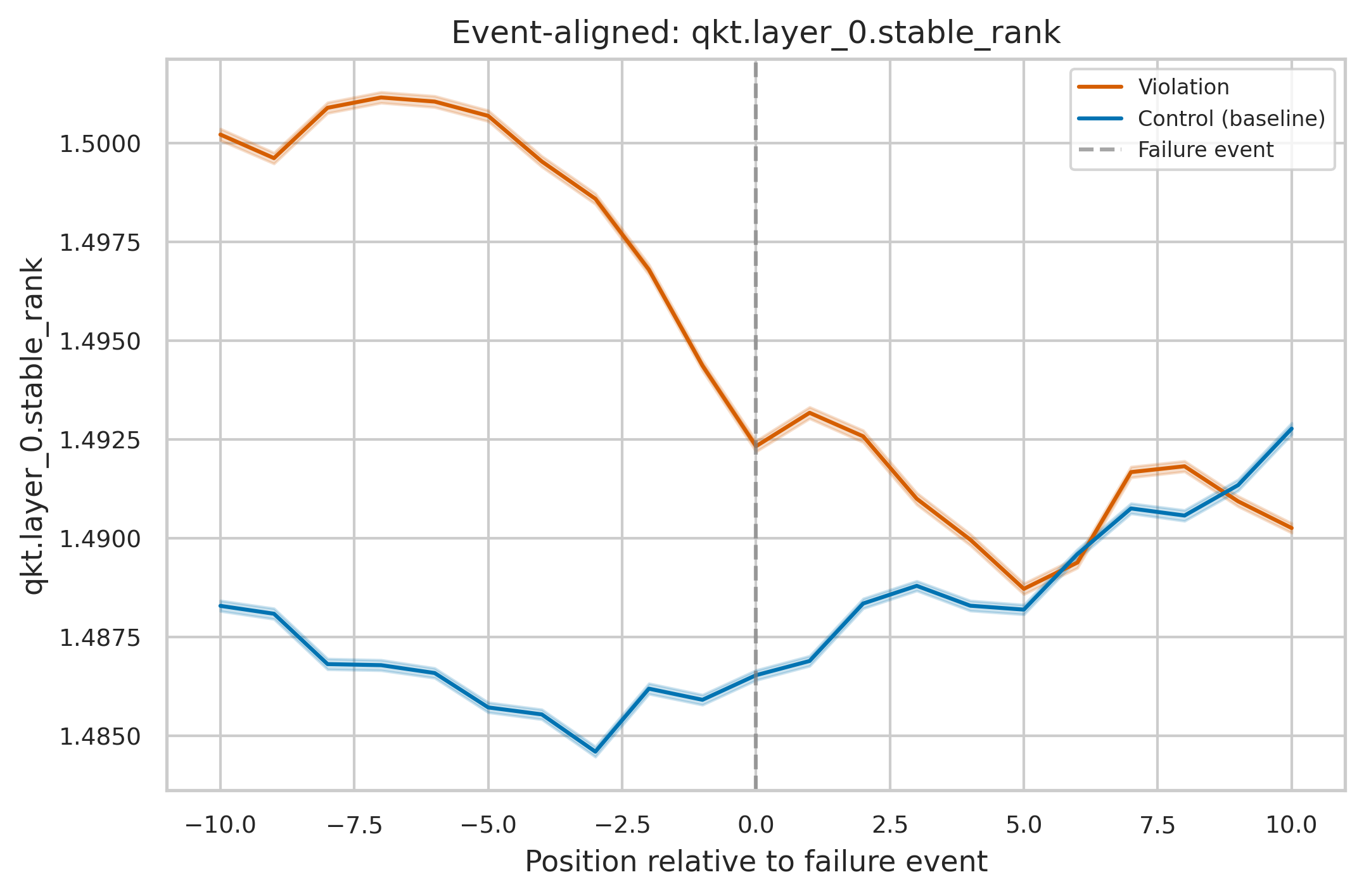

Result 1: Spectral Instability Precedes Violations

Before I get into AUROC numbers I need to be really careful about setting expectations, because the effect sizes here are small enough that I worried for a while I was chasing noise. The mean difference between violation and control spectral traces is often less than 1% of the baseline value. If you looked at a single token's stable rank and tried to make a call, you could not do it. We are nowhere near a threshold you could set on any one metric to flag failures in real time. What I do find is that this tiny shift is remarkably consistent. Across tens of thousands of events it accumulates into a reliable rank-ordering between violation and control populations, which is what AUROC measures. This is a population-level statistical signal. Not a per-token diagnostic. For each metric and rule length, I compute AUROC at every lookback distance \(j\) from 1 to \(r\) steps before the rule resolution: $$\text{AUROC}(j) = P\!\left(X_{\text{violated}}^{(t-j)} > X_{\text{followed}}^{(t-j)}\right)$$ The predictive horizon is the maximum \(j\) where \(\text{AUROC}(j) > 0.75\).

Within a single rule-length regime, AUROC ranges from 0.75 to 0.98, and the predictive horizons scale with rule length. The one that surprised me was \(r = 64\) (exactly the window) where AVWO grassmannian distance hits 0.987 AUROC with a horizon spanning the full 64 steps the signal extends all the way back to when the rule was first imposed. At \(r = 45\), \(QK^T\) stable rank reaches 0.982 with a 27-step horizon. At \(r = 128\) (\(2w\)), \(QK^T\) grassmannian distance reaches 0.946 with a 93-step horizon. Even the weakest regime, \(r = 32\), still shows 0.797 with a 17-step horizon. The attention subspace becomes measurably unstable well before the model emits the incorrect token. But "measurably" here means detectable with enough data, not visible on any single sequence.

Event-aligned \(QK^T\) stable rank. The two things here that are convincing. Provided of course we ignore the four significant digits on the y axis for a moment, the steepness of the descent on the violating walk versus relative steadiness of the valid walk. And second, that with a large enough sample, the confidence intervals around these two results do not overlap. This gives me hope that there is something subtle and mechanistic I can hunt for in Phi3.

Result 2: Pooling Across Rule Lengths Destroys the Signal

This is the result I keep coming back to. It took me a while to understand what was happening, and once I did I think it explains a lot about why prior work in this area has been underwhelming. Same metrics, same data, but pooled across all 8 rule lengths. Best single metric AUROC drops to about 0.70. I then spent a frustrating week testing whether accumulating the signal over time could rescue it. Rolling mean (window = 10) of stable rank: 0.700. Rolling variance, CUSUM, EWMA deviation: 0.55 to 0.63. 5-metric logistic regression: 0.711. Nothing breaks 0.72 when pooled. The cumulative metrics do not rescue it. Multi-metric composites add negligible lift. Once I saw it the reason was obvious. A model tracking a 8-step dependency just sits at a different baseline stable rank than one tracking a 32-step dependency. Those baselines vary more across rule lengths than the violation-vs-control shift varies within a single rule length. So when you pool everything together, you are averaging over what are really different operating regimes, and the regime differences swamp the signal you actually care about. I think this is why prior approaches to spectral hallucination detection have not gone anywhere. If you measure attention-based features across a diverse set of inputs and correlate with output quality, you are implicitly pooling across regimes. The signal is there within each one, it just cancels out when you mix them.

Result 3: Per-Regime Composites Recover Near-Perfect Discrimination

Conditioning on rule length and combining metrics changes the picture entirely. I fit a logistic regression on 5 raw spectral metrics (\(QK^T\) stable rank, \(QK^T\) Grassmannian distance, \(QK^T\) spectral entropy, AVWO stable rank, AVWO Grassmannian distance) within each rule-length stratum at a lookback of 5 steps:

| Rule length | Composite AUROC | Best single metric |

| -------------------- | --------------- | ------------------------------ |

| \(r = 32\) (\(0.5w\)) | 0.79 | avwo .gd at 0.77 |

| \(r = 45\) (\(0.7w\)) | 0.96 | qkt .sr at 0.88 |

| \(r = 58\) (\(0.9w\)) | 0.86 | avwo .sr at 0.72 |

| \(r = 64\) (\(1.0w\)) | 0.95 | avwo .sr at 0.85 |

| \(r = 70\) (\(1.1w\)) | 1.00 | qkt .gd at 0.92 |

| \(r = 83\) (\(1.3w\)) | 0.78 | qkt .sr at 0.67 |

| \(r = 96\) (\(1.5w\)) | 0.94 | qkt .sr at 0.93 |

| \(r = 128\) (\(2.0w\)) | 0.89 | qkt .gd at 0.86 |

Seven of eight regimes exceed 0.86. The pattern holds across lookbacks from 1 to 20 steps.

No single metric dominates and this is the part I find most interesting. \(QK^T\) stable rank carries \(r = 45\) and \(r = 96\). \(QK^T\) grassmannian distance carries \(r = 70\) and \(r = 128\). AVWO stable rank carries \(r = 58\) and \(r = 64\). AVWO grassmannian distance is the strongest contributor at \(r = 32\) where everything else is mediocre. The composite works because these metrics are genuinely complementary, each one is sensitive to a different failure geometry. The one regime that gave me trouble is \(r = 83\) (\(1.3w\)). This is where the jumper encounter falls just inside the context window but the resolution is well outside it. The model has partial information about the constraint, and the spectral signature of that partial knowledge is the hardest to distinguish from normal operation. I actually find this informative on its own, it identifies the exact boundary condition where monitoring is weakest.

What I am Taking From This

The three-layer finding, strong per-\(r\), weak pooled, strong composite per-\(r\), points to something specific about how you would build a practical monitor. The detector has to calibrate to the current operating regime. Two ideas follow: Self-calibrating change-point detectors. Things like CUSUM and BOCPD that establish a local baseline from the sequence's own history and flag deviations. They do not need to know the regime explicitly. In my data, CUSUM on AVWO stable rank achieves 0.967 AUROC at \(r = 70\) without any knowledge of \(r\), it just detects "something changed relative to what came before." The catch is inconsistency, it works beautifully at some rule lengths and falls apart at others. Explicit stratification. If you know the task structure (document length, dependency span, query type), you can learn per-stratum decision boundaries. More engineerable but requires calibration for each task. On computational cost, since this matters for whether any of this is practical: stable rank only requires \(\|M\|_F\) plus \(\sigma_1(M)\), which is a few power iterations, not a full SVD. Grassmannian distance needs the top-\(k\) singular vectors from a truncated or randomized SVD. You could run these cheaply at inference time and reserve full spectral analysis for regions that get flagged.

What Next?

I want to be clear about how far this is from anything useful in production. This is a toy model. The transformer is tiny. The task is synthetic. Real language involves many overlapping dependencies of different lengths all operating at once, not one clean rule resolving at a known step. The regime-conditioning result could be an artifact of the simplicity of the setup, where there is exactly one rule length per event and nothing else competing for the model's attention. We do not know yet. What the result gives me is direction. Which spectral properties are worth measuring, that regime-dependence is a specific threat to detection quality that needs to be addressed head on, and that complementary metrics on different circuits (QKT vs AVWO) contribute genuinely different information. These are design principles for the next experiment, not conclusions about production systems. The next step is Phi-3-mini, 3.8B parameters, 32 heads, 32 layers, on tasks where I can evaluate output quality against ground truth. Document QA with controlled evidence distance is one candidate where the distance from question to supporting evidence plays a role analogous to \(r\). Summarization faithfulness is another. The question I really want to answer is whether these spectral signatures show up at all in a model that is 30,000x larger operating on natural language rather than graph walks. Phi-3-mini also opens up a measurement I could not do here: inter-head geometry. With 32 attention heads per layer, I can compute the Grassmannian distance between head \(i\) and head \(j\)'s \(QK^T\) subspaces at the same token. This measures whether heads that normally agree are diverging. In the DCSBM experiment, the composite worked because different metrics on a single head captured different failure geometries. With multiple heads that specialize in different dependency types, the pairwise relationships between their subspaces might carry richer information about the model's internal state than any single-head metric. That is a hypothesis, not a result. Testing it is the next piece of work.

The full codebase, data, and analysis scripts are here